AI is making software harder to secure

Last week was a bad one for tech companies.

Vercel got breached through a third-party AI tool with too much access. Bitwarden shipped a backdoor through a compromised dependency. At Lovable, any user could read any other user's project. And $292M left KelpDAO after a single outside verifier got compromised and signed a forged message.

In each case, the code did what it was written to do. The break happened at a trust assumption nobody had treated as critical: a permission, a build dependency, a boundary between users, a single verifier. Audits don't catch these because they live in the seams between components, and they rarely get written down.

Attackers are getting much better at finding them. At [un]prompted, Nicholas Carlini from Anthropic showed that frontier models find exploits in codebases that have been reviewed for decades. A script asking the model to find them surfaced 500 high-severity vulnerabilities. Adversarial analysis of your system is coming, and the question is whether you get there first.

Formal methods are the answer because they force the assumptions out. Writing a specification means naming every principal the system trusts and every property it has to preserve, and the hidden seams stop being hidden. Last year, Rob McHenry at DARPA argued that combining formal methods with LLMs could "virtually eliminate software vulnerabilities" in critical infrastructure. The Beneficial AI Foundation recently launched Signal Shot to formally verify Signal's Rust implementation in Lean. AWS runs automated reasoning on every S3 deployment, as Mai-Lan Tomsen Bukovec puts it: "at a certain scale, math has to save you."

This is exactly the work we've been doing at Runtime Verification for years.

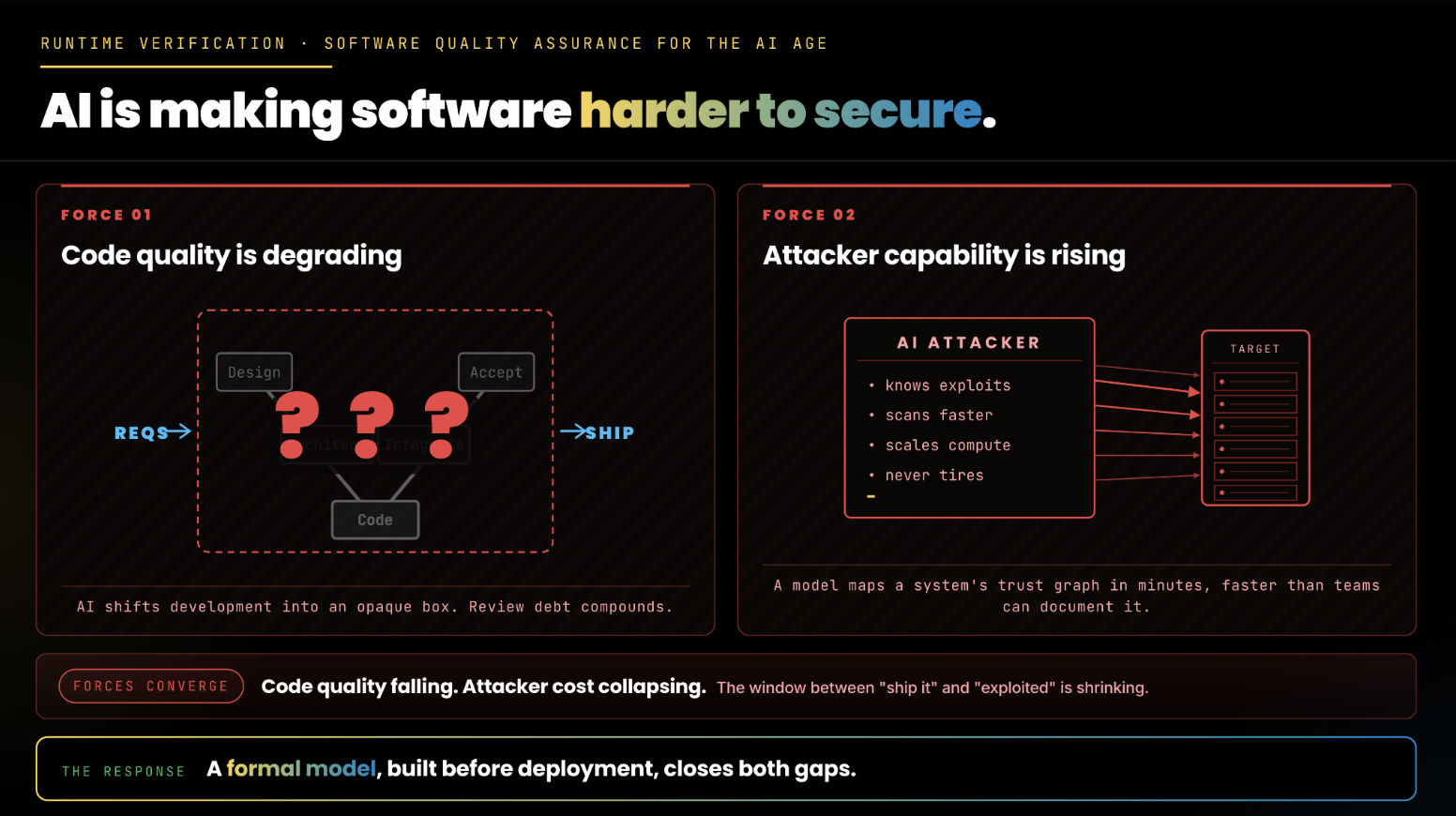

The development lifecycle is changing from both directions. AI agents ship weeks of work in hours, and they scan trust architectures for implicit assumptions in minutes. Build, audit, patch was the old development process. It assumed slow attackers, but slow attackers are no longer the relevant case.

The diagram below makes the argument visual. The window between shipping and getting exploited is shrinking, however, a formal model built before deployment closes the gap on both fronts. With a model, trust boundaries get named, engineers know which components are load-bearing, agents have something precise to reason from, and auditors arrive with a real map.

Aerospace, nuclear, and medical devices arrived here through regulation. The rest of software is arriving through economics, finding implicit assumptions just got orders of magnitude cheaper, and it got cheaper for the attacker first.

The earlier in a project's lifecycle a formal model exists, the more value it generates. Built before deployment, it becomes the context against which every process runs. If you're building software that cannot fail, get in touch at contact@runtimeverification.com.